Have you lately noticed public intellectuals, whom you deemed decently rational, suddenly turning tribal? It could be the result of an algorithm-induced hypnosis.

Hypnosis isn’t like brainwashing. I don’t even honestly know what brainwashing is. But hypnosis is mostly understood as a state wherein the subject is perfectly conscious, not under any influence, yet makes the moves that you (the “hypnotizer”) intend them to make, even before their mind can traverse the search space, understand it and predict the implications. It’s neither the condition of a sleepwalker, wherein the subject gives incoherent explanations of their present state, nor that of being indoctrinated. It is something in between.

Twitter, and other community networking platforms, increase consumption and usage metrics by hijacking attention and sending your mind down a funnel of labels. The weapon of choice here is a recommendation engine, i.e. machine learning models that predict the next most preferred piece of content to throw at you so that you are more likely to keep scrolling. Put the other way around, they minimize your chances of exiting the doom-scroll.

An unwanted (or intentionally wanted?) outcome of these models is that they keep knocking your mind with the next closest label or reference subject to the piece of content you are currently consuming.
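To make the “next closest label” idea concrete, here is a minimal sketch. It assumes each label can be represented as a vector and that “closest” means highest cosine similarity; both are assumptions for illustration, not a description of how Twitter or any other platform actually works. The labels and their coordinates are made up.

```python
import math

# Hypothetical label embeddings: invented 2-D vectors, for illustration only.
LABEL_VECTORS = {
    "tax policy": (0.9, 0.1),
    "party politics": (0.8, 0.3),
    "culture war": (0.6, 0.7),
    "gardening": (-0.7, 0.2),
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def next_closest_label(current, seen):
    """Return the unseen label most similar to the current one, or None."""
    candidates = [l for l in LABEL_VECTORS if l != current and l not in seen]
    if not candidates:
        return None
    here = LABEL_VECTORS[current]
    return max(candidates, key=lambda l: cosine_similarity(here, LABEL_VECTORS[l]))


def walk_funnel(start, steps):
    """Follow the 'next closest label' rule for a number of steps."""
    path = [start]
    for _ in range(steps):
        nxt = next_closest_label(path[-1], set(path))
        if nxt is None:
            break
        path.append(nxt)
    return path


print(walk_funnel("tax policy", 2))
```

With these toy vectors, a reader who starts on “tax policy” is walked to “party politics” and then to “culture war”, while “gardening” stays out of reach until everything nearby has been exhausted. That is the funnel in miniature.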

The problem, however, is that most humans synthesize their mental models based on emotional references and labels, and aren’t agentic enough to rationally predict the next branches of their “created” worldview with just pattern-level abstraction. Therefore, these recommendation engines can, and do, corrupt your mental models. But how do they achieve that influence?

Learning is the ability to implement an already taught solution to a specific set of problems.

Deep learning is the ability to find a solution to a specific set of problems.

Meta learning is the ability to learn.

Recommendation engines used by Twitter, and others, are mostly at the second stage for now. To make machines deep learn, we need to train a function that, when applied to the input state, takes us to the desired output state.

We start with an arbitrary (randomly initialized) blackbox function and optimize that function to produce outputs that are as accurate as possible for a given set of inputs. Loosely put, instead of ready-aim-fire, we do ready-fire-aim.

If you’ve read my post on building agency in machines, then you know that neural networks stand tall as one of the most trustworthy function optimizers, and are today responsible for everything from self-driving cars to GPT-3.

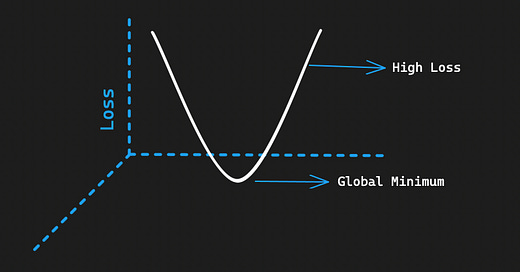

In other words, they try to calculate the future from the past by minimizing the difference (aka “loss”) between their calculated future and the one that we wanted.

One of the most popular algorithms used to optimize functions is stochastic gradient descent. The idea is to keep updating the patterns of our blackbox function at the end of every iteration, using the delta between its calculated output (content labels, in our case) and the expected output. Once it is visible that this delta is at its lowest, or that it is simply not going down any further, we stop training the function.

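This is how most modern AIs learn and improve. The difference being reduced is called the “loss,” and the direction in which the loss changes fastest as we tweak the function is called its “gradient.” Because this technique lowers the loss by repeatedly stepping down that slope, the algorithm is called “gradient descent.”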
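The loop described above can be sketched in a few lines. This is a toy, not anyone's production recommender: the “blackbox” here is assumed to be a simple line `w * x + b`, the loss is assumed to be squared error, and the names `train_sgd`, `lr`, and `tol` are hypothetical choices made for this example.

```python
import random


def train_sgd(data, lr=0.05, max_epochs=500, tol=1e-9, seed=0):
    """Fit y ~ w * x + b with stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    # Ready-fire-aim: start from an arbitrary, randomly initialized function.
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    samples = list(data)
    prev_loss = float("inf")
    loss = prev_loss
    for _ in range(max_epochs):
        rng.shuffle(samples)  # "stochastic": visit samples in random order
        for x, y in samples:
            error = (w * x + b) - y  # calculated output minus expected output
            # Gradient of 0.5 * error**2 is (error * x) for w and (error) for b.
            w -= lr * error * x
            b -= lr * error
        # Mean squared error over the whole data set after this pass.
        loss = sum(((w * x + b) - y) ** 2 for x, y in samples) / len(samples)
        if prev_loss - loss < tol:  # the loss is no longer going down: stop
            break
        prev_loss = loss
    return w, b, loss


# Toy data generated from the "desired" function y = 2x + 1.
points = [(x, 2 * x + 1) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w, b, loss = train_sgd(points)
print(round(w, 2), round(b, 2))
```

The function never sees the rule `2x + 1`. It only sees how wrong its last shot was and nudges itself in the direction that makes the next shot less wrong, stopping once the loss stops improving.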

Needless to mention, techno-ideological overlords (read: content-moderation teams) at these companies can also run these models to push your mind down a pre-selected funnel of labels and hijack your opinions until you hit an ideological rock bottom. Engineers can authorize new collective norms and upgrade them to collective illusions.

From here on, it’s easy money down the road.

By launching targeted advertisements subject to your browsing history.

By offering opposing parties the chance to alter 1% of the global opinion. Which is, needless to mention, A LOT.

By offering to alter just your (targeted) opinion.

All the aforementioned methods can attract fat cheques.

If Twitter can attention-jack and launch mimetic concepts on your political mind by curating text on your timeline, Netflix can do the same with video.

The abused term “echo chamber” is a misnomer. It implies that there is a way on these platforms for you to stay out of, or come out of, your echo chamber, perhaps by following personalities who hold conflicting mental models and using that new network as a yardstick to remain as objective as possible. In fact, crafting and altering your network of choicest personalities will only take you from one echo chamber to another, ad-hoc generated one.

The greater the distance between the global minima of two echo chambers, the greater the tribalism in society.