Building Agency In Machines

And what does it mean to be Sentient?

If you are a regular reader of this blog, you are already aware of my computational view of the universe. If you aren’t, I highly suggest you read at least two of my previous posts. The best ones are on free will and how to talk to your god.

In our purely computational universe, agency can be defined as the capability of an agent to control its future to the best accuracy of its betting algorithm.

To control the future, you need to create a model of the future. A world that is causally different from the world that you currently live in. Let’s just say, your hypothetical universe.

And to synthesize such a simulation, you need a Turing machine. Even the simplest agents in the universe will always have a Turing machine. One such system that we know of is the cell. Cells literally have a read-write tape in them.

To model agency in detail, I’ll have to give a quick overview of at least two mathematical models (sorry).

The infamous Neural Networks, which are today responsible for everything from self-driving cars to the latest GPT-3, stand tall as one of the most trustworthy function approximators. In other words, they try to calculate the future from the past by minimizing prediction error. But what is “prediction error”?

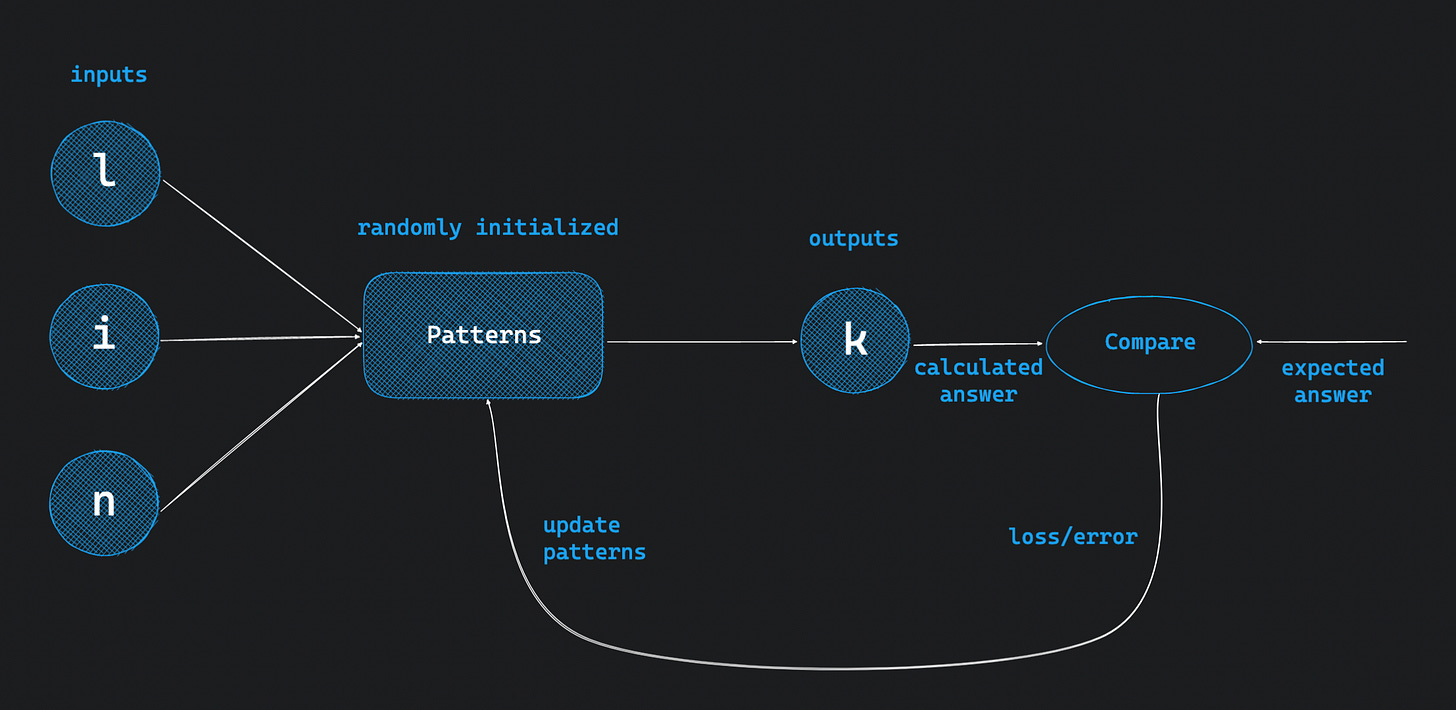

In plain English, neural networks are functions, with randomly initialized patterns (their weights), which predict the output from a given input. During the initial iterations, they predict wrong answers because the patterns are still random. For every iteration, we have to figure out the difference between the calculated answer and the expected answer. This difference is defined as the “loss” or “prediction error.”

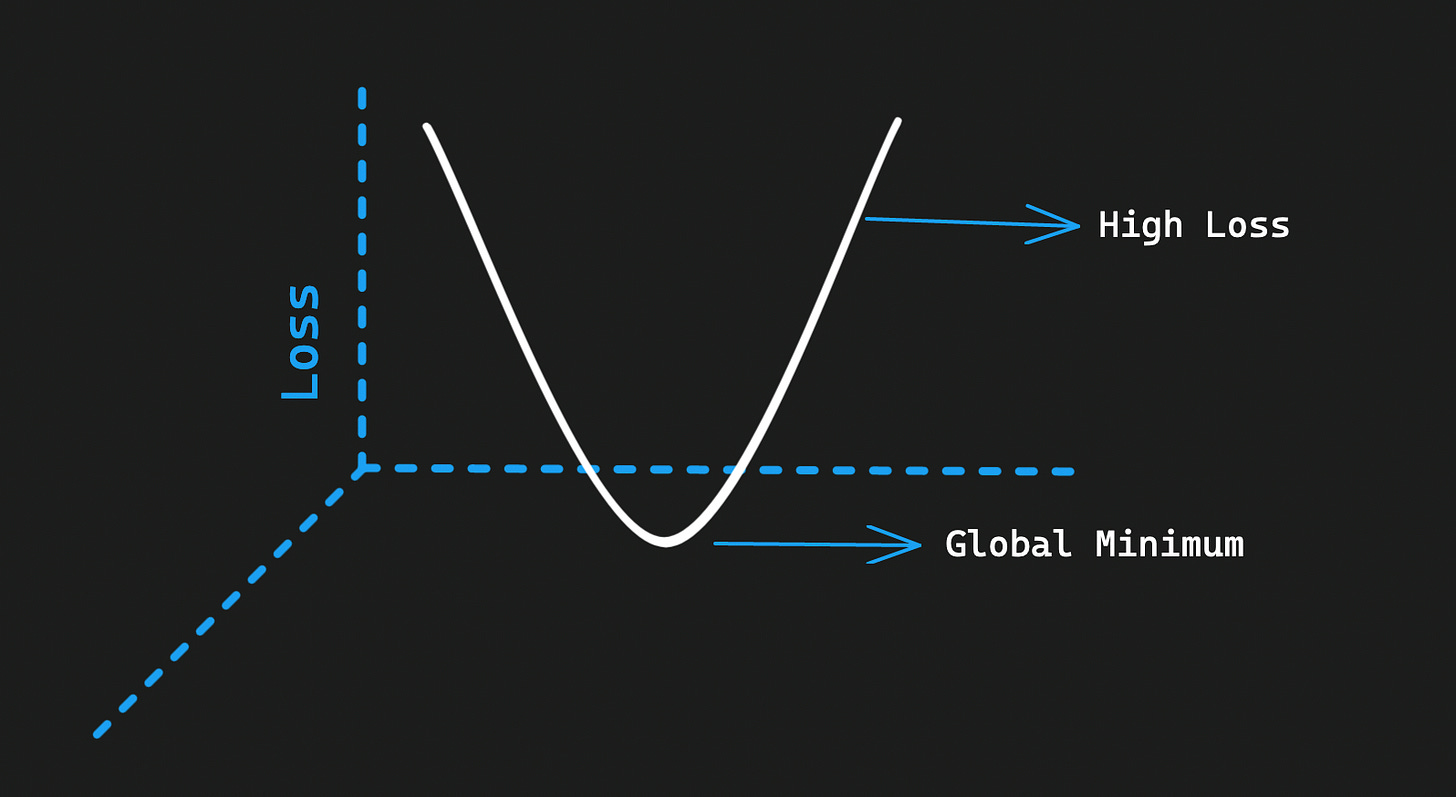

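After every iteration, we update the patterns using the gradient of the loss, which tells us in which direction to nudge each pattern to make the loss smaller. We repeat this until the loss stops improving. Ideally that point is the “global minimum,” although in practice training usually settles for a low point that is good enough. That is when we stop training the network. Because this technique works by stepping down the slope of the loss, it is called “gradient descent.” This is how neural networks improve and learn.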
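To make the loop concrete, here is a minimal sketch of gradient descent on the simplest possible “network”: a single weight `w` that tries to learn `y = 2x`. The data, the learning rate `lr` and the function name `train` are my own assumptions for illustration, and the weight starts at zero instead of a random value so the run is reproducible.

```python
def train(xs, ys, lr=0.01, steps=500):
    """Fit y = w * x by gradient descent on the mean squared error."""
    w = 0.0  # the "pattern"; fixed start here (an assumption) instead of random
    for _ in range(steps):
        # Prediction error for every example: calculated answer minus expected answer.
        errors = [w * x - y for x, y in zip(xs, ys)]
        # Gradient of the mean squared error with respect to w.
        grad = 2 * sum(e * x for e, x in zip(errors, xs)) / len(xs)
        # Step against the gradient so the loss goes down.
        w -= lr * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # toy data generated by y = 2x
w = train(xs, ys)
print(w)  # should end up very close to 2.0
```

A real network has millions of such weights and uses backpropagation to compute all the gradients at once, but the update rule is the same idea.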

You can play with basic neural networks over here.

Gradient descent as a fundamental technique enabled self-driving cars, autonomous weapons and SpaceX’s vertical launch Starship.

The genius neuroscientist Karl Friston, in his 2006 paper, introduced the world to his “free energy principle,” which allows us to build predictive models. Fair warning: his model is difficult to grasp. But I’ll try to break it down into street English purely for the sake of our understanding. According to Friston, his Free Energy Principle (FEP) is based on a fundamental question: “if you are alive, what all behaviour must you show?”

The FEP ground rule is that all systems, irrespective of how simple or complex they are, from cells to organisms to humans to civilizations, exist to minimize the prediction error between the states that they want to be in and the states that their sensory inputs tell them they are in. In plain English, minimizing free energy implies minimizing surprise.

The human brain soaks up vast amounts of information to make sense of the world. It generates its own dreams and inferences. The brain validates its hypotheses by running simulations. It updates its models based on feedback from the sensory relays by minimizing prediction error.

Bayesian mathematics is about updating what you already believe (the prior) in light of new evidence, so that historical data informs the next prediction. Bayesian logic says the brain is an “inference engine” that seeks to minimize “prediction error.” But this model has limitations for Friston. It only accounts for the interaction between beliefs and perceptions but has nothing to say about the body or action.
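For the curious, a single Bayesian update fits in a few lines. This is only a sketch of Bayes’ rule for one yes/no hypothesis, not Friston’s model. The scenario (is the window open, given that the room feels cold?) and all the numbers are made up for illustration.

```python
def bayes_update(prior, likelihood_if_true, likelihood_if_false):
    """Return P(hypothesis | evidence) using Bayes' rule."""
    # Total probability of seeing the evidence at all.
    evidence = likelihood_if_true * prior + likelihood_if_false * (1 - prior)
    return likelihood_if_true * prior / evidence


# Hypothetical numbers: before sensing anything, we believe the window is open
# 20% of the time. A cold reading shows up 90% of the time when the window is
# open and 30% of the time when it is closed.
posterior = bayes_update(prior=0.2, likelihood_if_true=0.9, likelihood_if_false=0.3)
print(posterior)  # belief that the window is open after the cold reading
```

The belief goes up after the cold reading, but it does not jump to certainty, because a cold reading is also fairly common with the window closed.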

Friston employs the term “active inference” to explain that organisms minimize surprise in (generally) two ways: absorb the surprise and update their models, or execute an action to test the prediction.

This explanation is generic enough to fit any example including where I’m minimizing free energy to reach my office to achieve my next milestone or goal.

What I love about Friston is that in his own words, “it’s not sufficient to understand which synapses, which brain connections, are working improperly, you need to have a calculus that talks about beliefs.”

Friston asserts that the same math that applies to cellular differentiation—the process by which generic cells become more specialised—can also be applied to cultural dynamics.

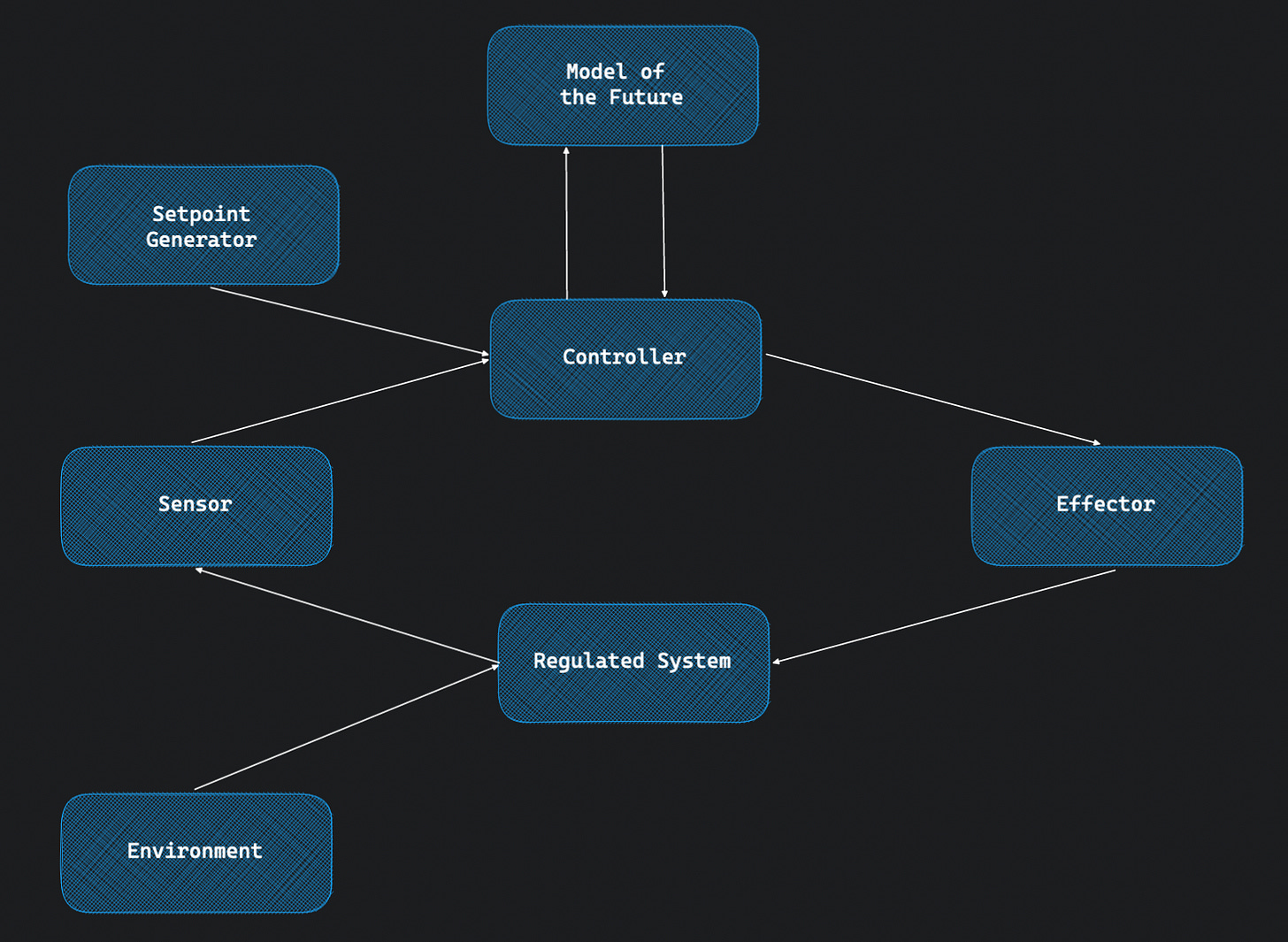

Every agent relies on a control circuit to help model its environment. Let’s generalize a control circuit using the following image.

A controller is a module that affects a (regulated) system using an effector and in turn, uses the sensory input to optimize itself further by minimizing the setpoint deviation.

The environment injects disturbances, or observations, into the regulated system.

Therefore, the controller has to constantly optimize itself to regulate the system. But how does it optimise itself? To perform this stunt, our control circuit needs to be able to model the environment in some way. But what way?

Let’s take the example of one of the simplest control circuits, i.e. a thermostat. It’s a simple device that takes your ideal room temperature as an input, then measures the current room temperature and calculates by how much it has to be changed. If the temperature is low, it increases the heating in the room. If the temperature is high, it lowers the heating.
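In code, this plain thermostat is barely more than an if-statement. Here is a rough sketch. The function name, the action labels and the small `deadband` (a tolerance so the heater doesn’t flip on and off around the setpoint) are my own assumptions.

```python
def thermostat_step(setpoint, current_temp, deadband=0.5):
    """Decide what to do right now, based only on the current deviation."""
    deviation = setpoint - current_temp  # how far we are from the ideal temperature
    if deviation > deadband:
        return "increase_heating"  # room is too cold
    if deviation < -deadband:
        return "decrease_heating"  # room is too warm
    return "hold"  # close enough to the setpoint


print(thermostat_step(setpoint=21.0, current_temp=18.0))
print(thermostat_step(setpoint=21.0, current_temp=23.5))
print(thermostat_step(setpoint=21.0, current_temp=21.2))
```

Notice that nothing in here looks ahead. The controller only reacts to the present moment.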

But this control circuit i.e. the thermostat isn’t classified as an agent. What do we need to do to build its agency?

The answer is rather simple: let it control the temperature into the future, and not just at the moment. And to do that, it somehow needs to build a model of the future.

This can be achieved with basic differential calculus. Just give the thermostat the capability to predict the temperature a few steps ahead in time. And let it simulate or calculate how the future differences in temperature would be affected by the actions it plans to take in the moment. For every prediction that goes wrong, let the thermostat’s computation engine, or simply the computer, go back and update itself by how much it missed. Again, it’s just some differential calculus at play. If you are an engineer, then you know this is the same idea that drives the backpropagation algorithm.
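Here is a toy sketch of that “update by how much it missed” step. I’m assuming a deliberately crude model in which the temperature change is just `gain * heater_power`, and the thermostat has to learn `gain` from its own prediction errors. The names and the value of `true_gain` are hypothetical. This is a one-parameter gradient step, not full backpropagation through a network.

```python
def update_gain(gain, heater_power, observed_change, lr=0.1):
    """One learning step: correct the model by how much its prediction missed."""
    predicted_change = gain * heater_power
    error = predicted_change - observed_change
    # The derivative of 0.5 * error**2 with respect to gain is error * heater_power.
    return gain - lr * error * heater_power


true_gain = 0.8  # hidden property of the (simulated) room, unknown to the thermostat
gain = 0.0       # the thermostat's initial, wrong belief
for _ in range(200):
    heater_power = 1.0
    observed_change = true_gain * heater_power  # what the sensor reports
    gain = update_gain(gain, heater_power, observed_change)

print(gain)  # the learned belief, which should approach true_gain
```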

From there on, the thermostat is no longer optimizing for the current temperature, but instead the integral of set-point deviations of the future. This implies that if the thermostat decides to turn off the heating right now versus 5 minutes into the future, there are going to be multiple trajectories of predicted temperatures in the future.
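A sketch of what this looks like in code: simulate each candidate plan with the thermostat’s model, add up the setpoint deviations along the predicted trajectory, and pick the plan with the smallest total. The room model and its constants (`outside`, `heat_gain`, `loss_rate`) are invented for illustration, with one step standing for one minute.

```python
def simulate(temp, plan, outside=10.0, heat_gain=0.5, loss_rate=0.05):
    """Predict the temperature trajectory for a plan of heater on/off steps."""
    trajectory = []
    for heater_on in plan:
        # Assumed toy model: the heater adds warmth, the room leaks towards outside.
        temp = temp + heat_gain * heater_on - loss_rate * (temp - outside)
        trajectory.append(temp)
    return trajectory


def cost(trajectory, setpoint):
    """Discrete stand-in for the integral of future setpoint deviations."""
    return sum(abs(setpoint - t) for t in trajectory)


def best_plan(temp, setpoint, plans):
    """Return the name of the plan whose predicted trajectory deviates least."""
    return min(plans, key=lambda name: cost(simulate(temp, plans[name]), setpoint))


horizon = 10
plans = {
    "off_now": [0] * horizon,
    "off_in_5_min": [1] * 5 + [0] * 5,
}
choice = best_plan(temp=18.0, setpoint=21.0, plans=plans)
print(choice)
```

With a cold room and a setpoint of 21, keeping the heater on for five more minutes gives the smaller total deviation, so that is the branch this toy planner picks.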

This leads us to a universe that branches into the future. And the thermostat decides which branch to prioritize or choose based on its model. We can solve this using reinforcement learning (RL) or some other class of algorithms. If you are not an engineer, then briefly understand that RL algorithms converge towards their terminal goals by balancing exploration (trying something new) against exploitation (repeating what has worked so far). They are guided by a reward function, which gives the agent a reward (a positive score) every time it chooses the right answer, or doesn’t choose the wrong answer, and a negative score or no score when it chooses the wrong answer.
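The smallest example of this trade-off that I know of is the epsilon-greedy “bandit.” The sketch below is generic RL, not anything specific to thermostats. The agent has a few actions with unknown reward probabilities, mostly picks the one it currently believes is best, and explores a random one with probability `epsilon`. The reward probabilities and the seed are arbitrary choices of mine.

```python
import random


def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent: learn which action pays off most often."""
    rng = random.Random(seed)
    values = [0.0] * len(reward_probs)  # estimated reward of each action
    counts = [0] * len(reward_probs)    # how often each action was chosen
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(reward_probs))  # explore: try anything
        else:
            action = max(range(len(values)), key=values.__getitem__)  # exploit
        # Reward of 1 for the "right answer", 0 otherwise.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Move the running average towards the reward just received.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts


values, counts = run_bandit([0.2, 0.8])
print(values, counts)
```

Over time the agent should end up choosing the second action far more often, simply because that is where the rewards are.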

This is similar to how the human brain bakes a cookie for you every time you make a correct decision that takes you closer to the intended goal, to signal and motivate you to move ahead in the same direction. Or how it gives you painful feedback if your decision moves you away from your intended goal.

Once the thermostat can synthesize its own model of the future and learn to optimize that model, as and when required, it can become more powerful. For example, it can become more energy efficient.

From here on, it is in the calculations between these learning iterations that the magic happens. The thermostat will start developing beliefs about its current state and the relationship between its current actions and future actions. The agent will accept some of its beliefs and develop intentions. Eventually, it will convert these intentions into actions.

Therefore, we have given agency to a simple control circuit by simply letting it control the future.

From here on, our agent can become very smart and start modelling the environment in very intricate ways, including itself. And this state is classified as having achieved “sentience.” That is when an agent learns to model itself.

The thermostat can also start learning the nature and inaccuracies of its sensors and effectors. For example, it can figure out ways in which the sensor affects the heating because it has been installed next to the heater.

Or if someone in the room opens the windows too often, then the heating will become less efficient. It doesn’t matter that the thermostat doesn’t have eyes to see this person. It would still have developed some representation of this alien in the environment that is injecting these unwanted disturbances. And eventually, it would learn the behaviour of this person over some time. It is safe to assume, at this point, that the thermostat’s predictions have already taken into account the potential actions of the person in the room.

Going forward, it is possible that if you give this agent enough entanglement with the world, it will become even more sentient. For example, you might want to give the thermostat more sensory inputs like eyes to actually see the world. Or a nose to smell.

Going back to our control circuit diagram, let’s define the following terms.

The regulator learns from feedback loops.

The controller, with its ability to make predictions, models the future.

The agent simply refers to the controller with a setpoint generator.

Sentience is defined as the ability of an agent to model itself.

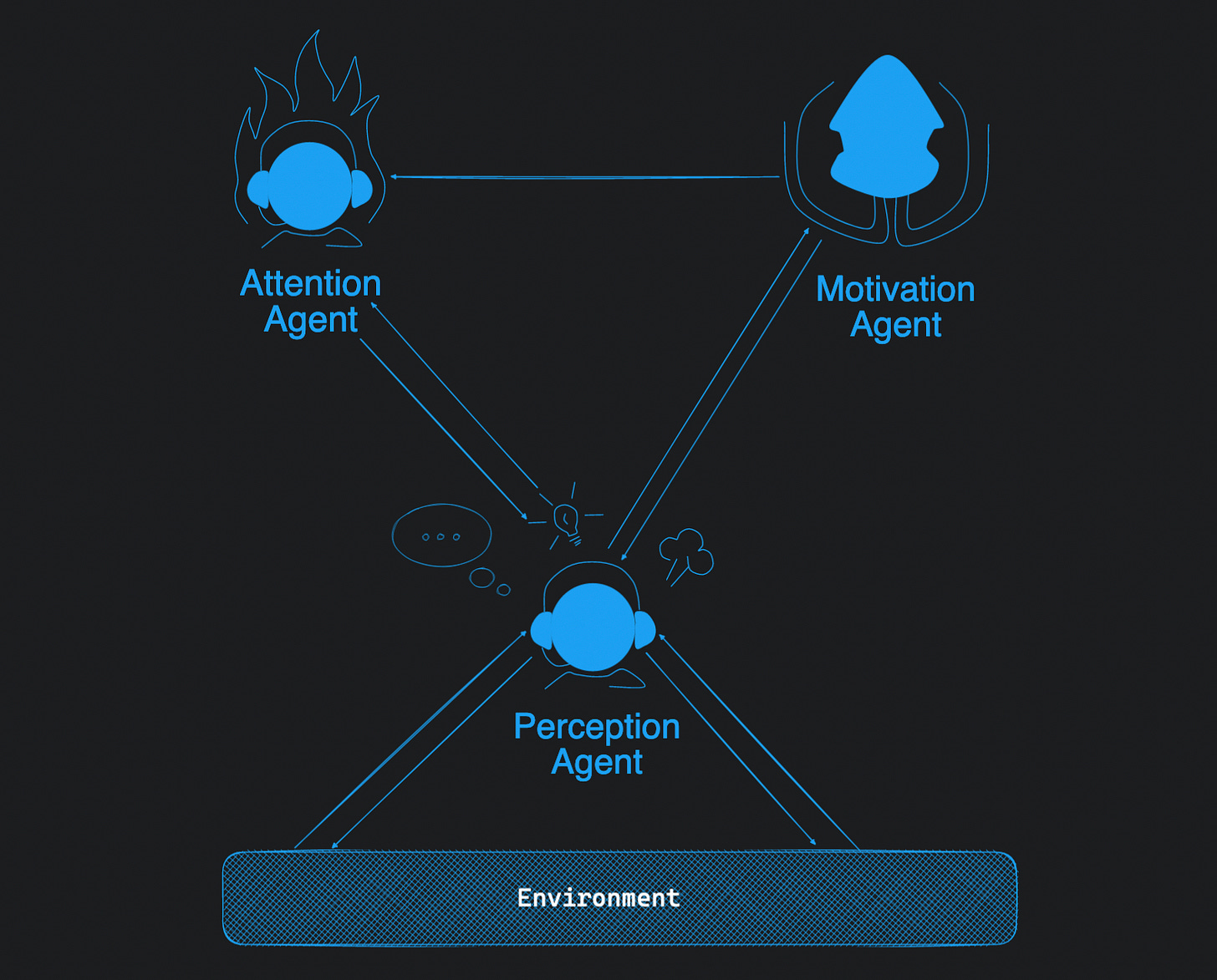

Similarly, we can create a rough model of our cognitive system consisting of different agents responsible for different jobs.

The attention agent operates on an indexed memory or a temporary cache. This agent tells itself the story about what it is doing.

The motivation agent assigns expected rewards to features. This is called “recognition” in our model of the mind. This enables tasks involving “learning” and “reasoning.”

The perception agent manages convergence via gradient descent, stochastic search and probability calculation.

If you turn off your attention agent, you become a sleepwalker. That implies you can still play the game, except without paying attention. Like playing free chess. If you interrogate the sleepwalker about what it is doing, the explanation wouldn’t be coherent with the actual state of the entangled environment. It would mostly be a random (better word: decoherent) model.

Consciousness is fundamentally just a simulation of contents in your perception system. In other words, it is the control model of your attention. If you can’t even remember what you were paying attention to, how will you be conscious? I’ll explain this in detail in a separate post on consciousness.

The role of consciousness is to essentially prevent you from dreaming. And the brain does it by creating a coherent story that remains synchronized with your perception. If you shut down perception, even a conscious mind that creates its own models will drift into the dream state.

Fundamentally, the same software that generates your dreams at night also generates dreams during the day, but it manages to keep them synchronized with your sensory inputs. At night, or during meditation, since you shut down your sensory inputs, you create arbitrary dreams. You can get a gist of similar ideas in my post on free will.

Always remember that the data being generated in a dream are not observations of the real world. They are merely extrapolations from your observations of the real world. Dreams will never contain any actor, concept, scene or design that you haven’t seen before. What they contain is a new story, generated from those contents, that you haven’t seen before.